The conversation around AI in education has become strangely narrow at the very moment the technology is becoming more powerful. Too often, the discussion revolves around whether lecturers are using ChatGPT, whether students are over-relying on AI, or whether institutions have adopted the latest digital tool, as though adoption itself were evidence of progress. Yet the most consequential question is not whether AI is present in the classroom, but whether it is being used to improve the quality of thought, the realism of learning, the coherence of teaching, and the capacity of educators to do demanding academic work well. That is where the work of award-winning Malaysian educator Inv. Galvin Lee Kuan Sian becomes interesting, not simply as the story of one lecturer building AI-related initiatives, but as a useful lens through which to ask what EdTech should be trying to achieve in the first place. Lee is a lecturer in marketing and economics at a top private college in Malaysia and a PhD candidate at the Asia-Europe Institute of Universiti Malaya, where he researches consumer behaviour. His wider body of public-facing work also places him at the intersection of pedagogy, innovation, and digital learning design.

What makes that worth examining is not merely the existence of projects with AI in them, because educational technology is already full of pilots, demos, and shiny interfaces that produce more excitement than value. The more important point is that Lee’s work appears to be organised around a stronger idea: that AI matters when it changes the design of learning, rather than merely accelerating the production of educational content. That distinction is critical. A lecturer can use AI to generate slides, summaries, quiz questions, and feedback comments. Still, if the learning experience remains passive, fragmented, and cognitively thin, then the technology has not transformed anything important. It has simply made an old model faster. By contrast, when AI is used to create richer practice environments, support better instructional decisions, and help educators maintain rigour under real workload pressure, it begins to justify the attention it has attracted. That broader direction is also consistent with how major international bodies now frame the issue: UNESCO stresses a human-centred approach to AI in education, while the OECD’s latest education outlook argues that generative AI supports learning most effectively when guided by clear teaching principles rather than deployed as a free-floating shortcut.

This is where the reader can draw the first serious lesson. The real unit of innovation in education is not the tool. It is the learning design. That sounds almost obvious, yet the EdTech sector still behaves as though novelty were enough. In practice, students do not benefit very much from AI simply because it can answer questions quickly. They benefit when AI helps construct a setting in which they must observe more carefully, compare more critically, justify more explicitly, and apply knowledge under more realistic conditions. A great deal of weak AI adoption in education fails precisely because it confuses information access with intellectual development. Students may end up producing more fluent outputs while doing less original reasoning, and institutions may celebrate the appearance of innovation while the underlying pedagogy remains unchanged. The sharper test is therefore whether AI raises the standard of thinking demanded from learners, or merely lowers the friction of producing something that looks finished.

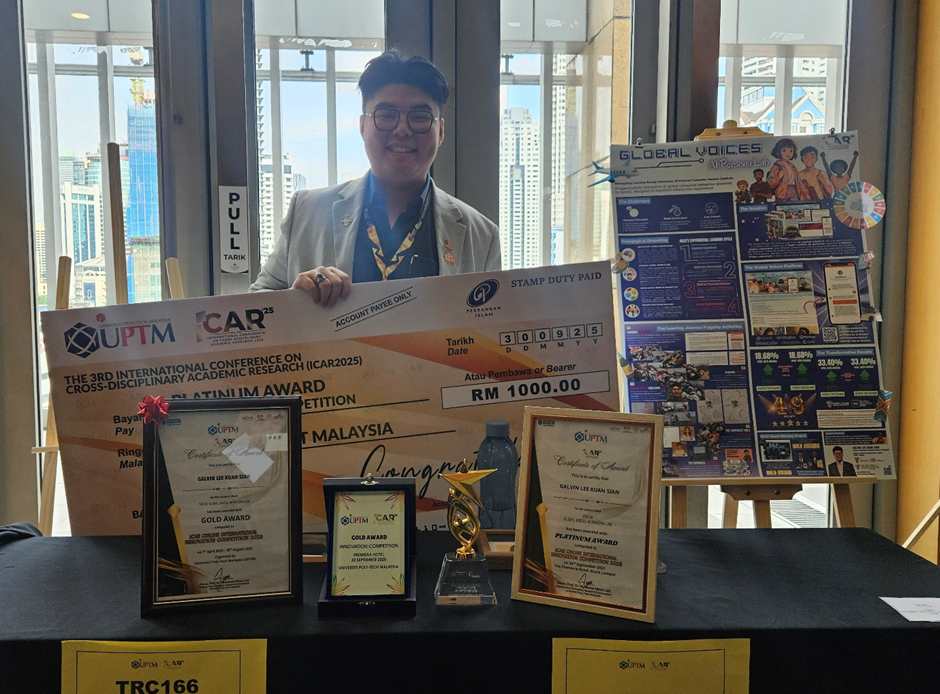

That is one reason Lee’s portfolio is more interesting when treated as evidence of a philosophy rather than as a catalogue of projects. His EdTech initiatives developed at the Galvin Lee Innovation Lab, including Global Voices: AI Persona Lab, Cafe Conquest: Beach Breeze, The ShopQuest Mall, and IntelLect: Intelligent AI Suite for Lecturers, suggest an attempt to move AI beyond the generic classroom chatbot and toward simulation, applied decision-making, lecturer workflow support, and flexible delivery design. In this context, the details of each project matter less than the pattern they form. That pattern suggests that AI is most valuable when it is embedded inside a broader educational architecture, where it supports how students practise, how lecturers design, and how teaching quality is sustained across different contexts. That is a much more demanding and useful vision than the now-familiar model in which AI acts merely as a faster writing assistant. This vision has earned Lee wide recognition, including numerous gold medals at international education innovation competitions and, most notably, the titles of “Lecturer of the Year” and “Innovative Educator of the Year” at the Global Education Awards 2025.

The second lesson for readers is that the future of AI in education may depend just as much on teacher-side infrastructure as on student-facing tools. This is the part of the conversation that receives far too little attention. Schools and universities often talk about what AI can do for student engagement, personalised learning, or tutoring, but much less about what educators are actually dealing with on the ground. Teaching does not consist only of delivering content. It involves curriculum mapping, lesson design, assessment construction, moderation, documentation, rubric development, quality assurance, feedback, student consultation, and constant revision under tight time constraints. If AI enters this environment merely as another platform to manage, it can make the system noisier rather than better. If, however, it genuinely reduces design friction while preserving academic judgment, it can help create the conditions under which better teaching becomes possible. In other words, one of AI’s most credible roles in education may be less glamorous than the industry likes to admit: helping educators remain rigorous, coherent, and responsive in systems that often stretch them too thin.

That point becomes even more important when we consider flexible and hybrid teaching, an area where many institutions have already learned that technology alone does not solve pedagogical problems. At the institution where Lee lectures, the HyFlex (Hybrid-Flexible) methodology sits at the heart of forward-looking curriculum delivery, with heavy use of AI-assisted independent learning. HyFlex, blended learning, and other flexible delivery models are often treated as logistical arrangements, when in reality they are design problems of a very high order. Once students are distributed across modes, the educator has to think much more carefully about attention, participation, sequencing, interaction, cognitive load, and the fairness of the learning experience itself. Poor flexible teaching usually does not fail because the platform is inadequate. It fails because the pedagogy has not been redesigned to account for that complexity. Lee’s HyFlex work is relevant here not because it offers a branded answer to a trend, but because it reflects a more useful principle: flexible education works only when delivery, activity, and assessment are engineered as one coherent system. In that setting, AI becomes valuable not because it makes teaching look futuristic, but because it can help sustain quality across a more demanding and distributed learning environment.

The third lesson is that EdTech becomes more defensible when it creates authentic practice rather than simply automating academic routines. One of the persistent weaknesses in higher education is that students often learn concepts in isolation from the kinds of messy, judgment-heavy contexts in which those concepts are supposed to matter. This is especially true in business, marketing, and consumer behaviour, where students can memorise frameworks without ever meaningfully rehearsing how those frameworks would be used in a real decision environment. AI has genuine promise here, particularly when it is used to build simulated interactions, scenario-based learning spaces, and decision environments that force students to interpret signals rather than merely restate definitions. The significance of this is easy to miss. Educational depth does not come from making knowledge easier to retrieve. It comes from making it harder and more necessary to use. That is where AI can move beyond convenience and start contributing to something closer to expertise formation.

A fourth lesson, and perhaps the most important for the education sector, is that AI should not be adopted as a prestige marker. There is already a temptation across institutions to treat AI as a branding exercise, a symbol of modernity that can be announced, showcased, and publicised. That impulse is understandable, but it is risky because it rewards visibility over depth. The better question for educators, institutions, and EdTech founders is not whether a classroom or platform contains AI, but whether the technology has improved the educational experience in ways that are observable, defensible, and durable. Has it strengthened feedback quality? Has it created more authentic practice? Has it improved coherence across curriculum, activity, and assessment? Has it reduced meaningless workload while preserving academic standards? Has it widened access without hollowing out learning? These are much tougher questions than “Are we using AI?”, but they are also the only questions that matter if the goal is educational value rather than digital theatre.

This is where the broader policy conversation becomes useful rather than abstract. UNESCO has warned repeatedly that AI in education must remain ethical, human-centred, and attentive to inequality. At the same time, OECD work on generative AI emphasises the centrality of teacher guidance, safety, accountability, and instructional purpose. Those concerns are not administrative footnotes to innovation. They are part of the definition of good innovation. AI in education should not weaken teacher agency, obscure how learning is being shaped, or deepen the gap between students who know how to use such tools critically and those who do not. If anything, the arrival of AI makes teacher expertise more important, not less, because someone still has to decide what counts as good thinking, good evidence, good pedagogy, and good judgment. The technology may assist, but it cannot define those standards on its own.

Seen in that light, the value of Lee’s work is not primarily that it demonstrates one educator building projects with contemporary tools. Its deeper value is that it points toward a more serious standard for the EdTech sector. It suggests that the future of AI in education will belong less to those who adopt the most tools and more to those who understand how to redesign learning around what still matters most: human judgment, authentic practice, pedagogical coherence, and responsible scale. That is a more demanding vision than the current market hype, but it is also a more credible one. If AI is going to have a meaningful future in education, especially in Malaysia, it will not be because it made content generation easier. It will be because it helped educators build learning experiences that are harder to fake, easier to apply, and more deeply worth having.